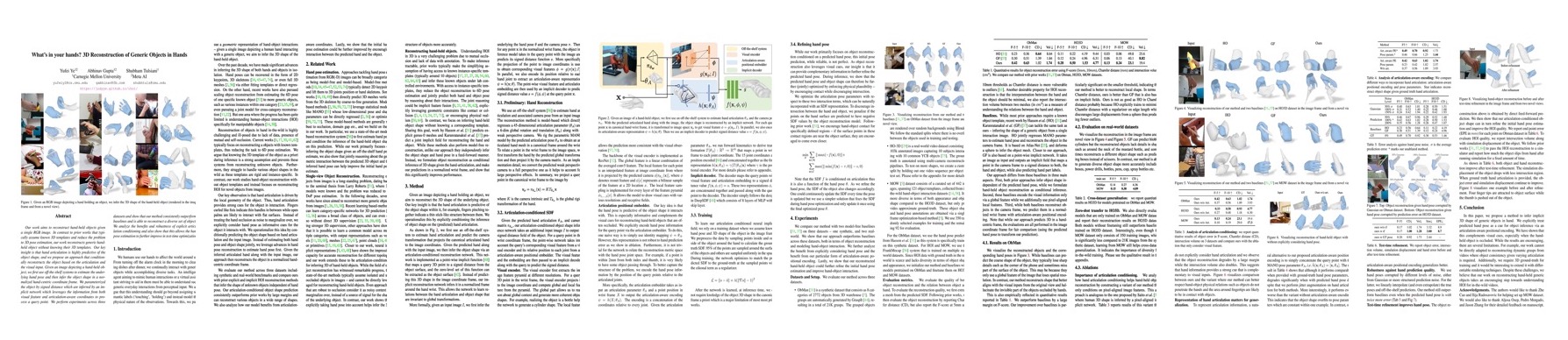

Our work aims to reconstruct hand-held objects given a single RGB image. In contrast to prior works that typically assume known 3D templates and reduce the problem to 3D pose estimation, our work reconstructs generic hand-held object without knowing their 3D templates. Our key insight is that hand articulation is highly predictive of the object shape, and we propose an approach that conditionally reconstructs the object based on the articulation and the visual input. Given an image depicting a hand-held object, we first use off-the-shelf systems to estimate the underlying hand pose and then infer the object shape in a normalized hand-centric coordinate frame. We parameterized the object by signed distance which are inferred by an implicit network which leverages the information from both visual feature and articulation-aware coordinates to process a query point. We perform experiments across three datasets and show that our method consistently outperforms baselines and is able to reconstruct a diverse set of objects. We analyze the benefits and robustness of explicit articulation conditioning and also show that this allows the hand pose estimation to further improve in test-time optimization.

Video

Comparison with Baselines

Our approach differs from two baselines (HO[Hasson et al., 19], GF[Karunratanakul et al. 20]) in three main aspects:

1) We formulate hand-held object reconstruction as conditional inference.

2) We additionally use pixel-aligned local features.

3) We predict object in a normalized wrist frame with articulation-aware positional encoding.

Zero-shot cross-dataset generalization

We directly evaluate models that are only trained on ObMan and MOW datasets and report their reconstruction results on HO3D dataset. Both models without finetuning still outperform baselines trained on HO3D dataset. Interestingly, even though the MOW dataset only consists of 350 training images, which is significantly less compared to 21K images from the synthetic dataset, learning from MOW still helps cross-dataset generalization. It indicates the importance of diversity for in-the-wild training.

Ablations on Hand Articulation

We ablate with or without explicitly considering hand pose. The comparison suggests that hand information provides complementary cue to visual inputs to alleviate depth ambguity.

Test-Time Refinement

At test time, we optimize the articulation pose parameters to encourage contact and to discourage intersection, which can be naturally incorporated with an SDF representation. The test-time optimization increases physical plausibility.